Beyond the Pancreas: Inside the World’s Largest Medical AI Experiment

As the world’s biggest tech companies race to integrate AI into healthcare, some of the most ambitious experiments aren’t happening in Silicon Valley—they’re happening in Chinese hospitals.

In early 2026, The New York Times published a sweeping feature titled “In China, A.I. Is Finding Deadly Tumors That Doctors Might Miss.”

The piece zeroed in on a hospital in Ningbo, a coastal city in China’s Zhejiang province, where an AI model called PANDA (Pancreatic Cancer Detection with AI) was catching early-stage pancreatic cancer signals on routine, non-contrast CT scans—signals so faint that even trained human eyes routinely miss them. In the story, the technology saved the life of a retired bricklayer.

The reporting marveled at how scientists had leveraged massive datasets to crack a problem that had long stumped the medical world: large-scale early screening for pancreatic cancer.

As it happens, we established a deep relationship with the Alibaba medical AI team behind this project back in 2023, and have been tracking the effort—codenamed PANDA—for two consecutive years.

We didn’t just witness the journey from paper to deployment. We interviewed the project leads, frontline radiologists, and more. Our entire editorial team even went in person to experience the team’s newest gastric cancer AI screening model firsthand.

But as our reporting deepened, it became clear that the Times story only scratched the surface.

What we discovered is that Alibaba isn’t stopping at pancreatic cancer screening. Their ultimate goal is something far more ambitious: “one scan, multiple screenings.”

In the future, patients may not need to schedule a dedicated cancer check at all. When an ordinary person walks into a hospital for a cold, lower back pain, or gallstones and gets a simple chest-abdomen CT, the AI will—beyond helping diagnose the relatively obvious abnormalities visible on a non-contrast scan—tirelessly sweep across the patient’s entire body in the background, completing early screening for pancreatic cancer, gastric cancer, aortic dissection, and more.

Put differently: what’s unfolding across these hospitals may amount to the largest medical AI experiment in human history.

A Unique Medical Landscape That Gave Medical AI Fertile Ground

Why is this happening in China? Here’s the short version: unlike most countries and regions, China’s healthcare system has an extraordinarily distinctive set of characteristics.

Infrastructure capacity is overflowing, while specialist attention is scarce—creating a massive scissors gap between the ocean of medical data being generated and the limited number of doctors available to interpret it.

First, in China, the barrier to accessing medical resources is remarkably low. Thanks to an overflow of infrastructure investment, even remote township health clinics now routinely operate 64-slice or even 128-slice CT machines around the clock. (For the uninitiated: “slices” refer to the number of detector rows along the Z-axis of a CT scanner. More rows generally mean faster imaging and finer detail. 64-slice and above is typically suited for dynamic organs like the heart and blood vessels.)

As of 2024, China has approximately 34 CT machines per million people. By comparison, OECD data puts the average among developed nations at roughly 27 per million. China’s per-capita CT availability already exceeds that of many advanced economies.

The cost is strikingly low, too. Even paying entirely out of pocket, a non-contrast chest and abdominal CT in China costs around 200 RMB—roughly $30.

Actual payment receipt from a Zhejiang hospital: ¥223 (~$30) for a chest-abdomen CT scan.

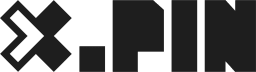

In the U.S., by contrast, ConsumerShield data puts the average total price for the same scan at over $1,000.

Average out-of-pocket CT scan costs in the United States. Source: ConsumerShield

Yet while China’s medical infrastructure has penetrated deep into smaller cities and towns, quality radiologist talent remains acutely scarce—and heavily concentrated in top-tier hospitals in first-tier cities like Beijing, Shanghai, and Guangzhou.

This imbalance drives a flood of patients with complex conditions toward elite urban hospitals, while many second- and third-tier cities find themselves stuck in an awkward limbo: cutting-edge equipment, but not enough skilled people to interpret what it produces.

According to publicly available data, China has roughly 200,000 radiologists. But they face a staggering demand of nearly 8 billion imaging examinations per year. Chinese radiologists read an estimated 3 to 5 times more images annually than their counterparts in Europe and the U.S.
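
To make that workload concrete, here is a rough back-of-the-envelope sketch using the two figures quoted above. The number of working days per year is our own assumption, not something from the data, and the result is an average that ignores how unevenly exams are distributed across hospitals.

```python
# Figures quoted in the article.
RADIOLOGISTS = 200_000
EXAMS_PER_YEAR = 8_000_000_000

# Assumption for illustration only; not from the article.
WORKING_DAYS_PER_YEAR = 250


def exams_per_radiologist(exams: int, radiologists: int) -> float:
    """Average number of examinations each radiologist would have to cover."""
    return exams / radiologists


per_year = exams_per_radiologist(EXAMS_PER_YEAR, RADIOLOGISTS)
per_day = per_year / WORKING_DAYS_PER_YEAR

print(f"{per_year:,.0f} exams per radiologist per year")
print(f"{per_day:,.0f} exams per radiologist per working day")
```

Under these assumptions, the average comes out to tens of thousands of examinations per radiologist per year.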

The convenience of the hardware has caused the volume of medical imaging data, CT scans in particular, to explode, while the supply of doctors remains severely inadequate and their reading capacity is stretched to the breaking point.

And so, within this medical reality that is not just different from Western countries but almost lopsided in its extremes, the Alibaba team chose a hell-mode starting point: use non-contrast CT to take on pancreatic cancer—one of the deadliest cancers in existence—and make large-scale early screening possible.

Using AI to Crack the Unsolvable Problem of Mass Pancreatic Cancer Screening

“How can non-contrast CT possibly see this? How did you clean your data? How do you control for false positives?”

Zhang Ling, the head of multi-cancer screening at Alibaba DAMO Academy’s Medical AI Lab, told us in an exclusive interview that when their paper—titled “Large-scale pancreatic cancer detection via non-contrast CT and deep learning”—was submitted to Nature Medicine, it was met with unprecedented skepticism.

The team shared a telling detail: during peer review, one exceptionally rigorous international reviewer fired off 58 pointed technical questions in a single round.

In the past, a pancreatic cancer diagnosis was practically a death sentence—nearly 90% of patients didn’t survive five years after diagnosis.

The pancreas is extraordinarily well-hidden, tucked behind the stomach and wrapped by the duodenum, spleen, and liver. In conventional medical wisdom, the idea of catching early-stage pancreatic cancer on a routine non-contrast CT was pure fantasy.

To truly see it, you need a contrast-enhanced CT. But that’s not only expensive (typically over 1,000 RMB, or roughly $145 and up), it also requires injecting contrast dye into the patient’s bloodstream. For patients with allergy histories or sensitivities, the risk of allergic reactions is relatively high. The dye can also worsen kidney function in patients with severe renal impairment.

This creates a real-world dilemma. Between the financial burden, the physical toll of allergies and radiation exposure (CT scans of the same body part are generally recommended no more than once every 3–6 months), and the strain on medical resources, large-scale pancreatic cancer screening simply isn’t feasible through conventional means.

If you can’t screen at scale, you don’t catch it. And if you don’t catch it, by the time symptoms appear, it’s almost always late-stage. That has been the longstanding deadlock around mass pancreatic cancer screening.

Facing the skeptics head-on, the Alibaba team responded to every challenge. The paper was not only published in Nature Medicine—the journal took the rare step of running an accompanying editorial commentary, titled: “AI and imaging-based cancer screening: getting ready for prime time.”

The accompanying Nature Medicine editorial commentary (November 2023).

Cao Kai, co-first author of the paper, told us that his drive to push this project through was rooted in a heartbreaking personal story.

His mentor, a top cancer researcher who had devoted his entire career to the disease, got a checkup every year and still died of late-stage pancreatic cancer. After his mentor passed, Cao Kai pulled up the CT scan from a routine checkup ten months before the diagnosis. With the benefit of hindsight, he could barely make out a faint shadow lurking in the corner of the image.

If even a world-class expert couldn’t spot early pancreatic cancer on a non-contrast scan, what hope does an ordinary person have?

Later, he met Zhang Ling, the Alibaba team lead. They hit it off immediately and launched the PANDA project.

But the first obstacle was the dataset. Where would they get the massive volume of professionally annotated data they needed? The arrival of Jin Gang, deputy director of the Shanghai Institute of Pancreatic Diseases, was a lifeline.

They assembled 48 doctors from hospitals across the country, who collectively annotated abdominal CT images from over 3,000 patients. A large share of these cases came from the Shanghai Institute of Pancreatic Diseases, drawing on nearly a decade’s worth of data accumulated since its founding in 2015.

Every one of those 3,000-plus patient datasets was manually annotated by doctors, one by one. And because the contrast on non-contrast CT images is so low that manual annotation isn’t practical, the doctors performed all their annotations on contrast-enhanced CT images instead.

The annotation process itself was intensely specialized. Doctors had to trace the two-dimensional outline of the tumor on each layer of the CT scan—each scan containing ten to twenty-plus layers. Stack those 2D images together, and you get a 3D map of the entire tumor.

Multiple layers of CT scan images used in the annotation process.
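
The idea of stacking per-layer outlines into a 3D tumor map can be illustrated with a minimal sketch. This is a toy representation of our own (nested lists of 0s and 1s on a tiny 4x4 grid), not the team’s annotation tooling or file format; the function names are hypothetical.

```python
from typing import List

Mask2D = List[List[int]]  # one layer: 1 = pixel inside the traced outline
Mask3D = List[Mask2D]     # layers stacked along the scan axis


def stack_slices(slices: List[Mask2D]) -> Mask3D:
    """Stack per-layer 2D masks into one 3D mask, checking shapes match."""
    if not slices:
        raise ValueError("need at least one slice")
    rows, cols = len(slices[0]), len(slices[0][0])
    for layer in slices:
        if len(layer) != rows or any(len(row) != cols for row in layer):
            raise ValueError("all slices must have the same shape")
    # Copy rows so the volume does not alias the input lists.
    return [[row[:] for row in layer] for layer in slices]


def tumor_voxels(volume: Mask3D) -> int:
    """Count voxels marked as tumor across the whole volume."""
    return sum(value for layer in volume for row in layer for value in row)


def slices_with_tumor(volume: Mask3D) -> List[int]:
    """Indices of layers that contain at least one tumor pixel."""
    return [i for i, layer in enumerate(volume) if any(any(row) for row in layer)]


# Hypothetical toy annotation: three layers, tumor visible on two of them.
annotated_slices = [
    [[0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]],
    [[0, 0, 0, 0], [0, 1, 1, 0], [0, 1, 1, 0], [0, 0, 0, 0]],
    [[0, 0, 0, 0], [0, 1, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]],
]

volume = stack_slices(annotated_slices)
print(tumor_voxels(volume), slices_with_tumor(volume))
```

Real annotations are far larger and carry physical spacing information, but the structure is the same: a 3D mask is just an ordered stack of 2D ones.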

With the training data ready, the next step was choosing and training the model.

The research team first built a registration algorithm to transfer the annotations from contrast-enhanced CT data onto the non-contrast CT images—teaching the AI to read non-contrast scans.
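
As a heavily simplified sketch of what “transferring annotations” means, the example below moves a tumor mask from one image grid to another using a known shift. This is only an illustration: the article does not say which registration method the team used, real registration estimates the alignment from the images themselves and is usually not a simple shift, and the function name and offsets here are hypothetical.

```python
from typing import List

Mask2D = List[List[int]]


def transfer_mask(mask: Mask2D, shift_rows: int, shift_cols: int) -> Mask2D:
    """Move every labeled pixel by a fixed offset; drop pixels that leave the grid.

    The offset stands in for the alignment a registration algorithm would
    estimate between the contrast-enhanced and non-contrast scans.
    """
    rows, cols = len(mask), len(mask[0])
    moved = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            if mask[r][c]:
                new_r, new_c = r + shift_rows, c + shift_cols
                if 0 <= new_r < rows and 0 <= new_c < cols:
                    moved[new_r][new_c] = 1
    return moved


# Hypothetical outline traced on a contrast-enhanced layer.
contrast_mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
]

# Assumed alignment: the patient sits one row lower and two columns to the
# right in the non-contrast scan.
non_contrast_mask = transfer_mask(contrast_mask, shift_rows=1, shift_cols=2)
for row in non_contrast_mask:
    print(row)
```

Once the labels sit on the non-contrast image, a model can be trained on scans where no human could have drawn the outline directly.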

While 3,000 cases is considered quite a lot in the medical domain, for deep learning, it’s decidedly middling. So they doubled down on the model architecture and algorithms to compensate.

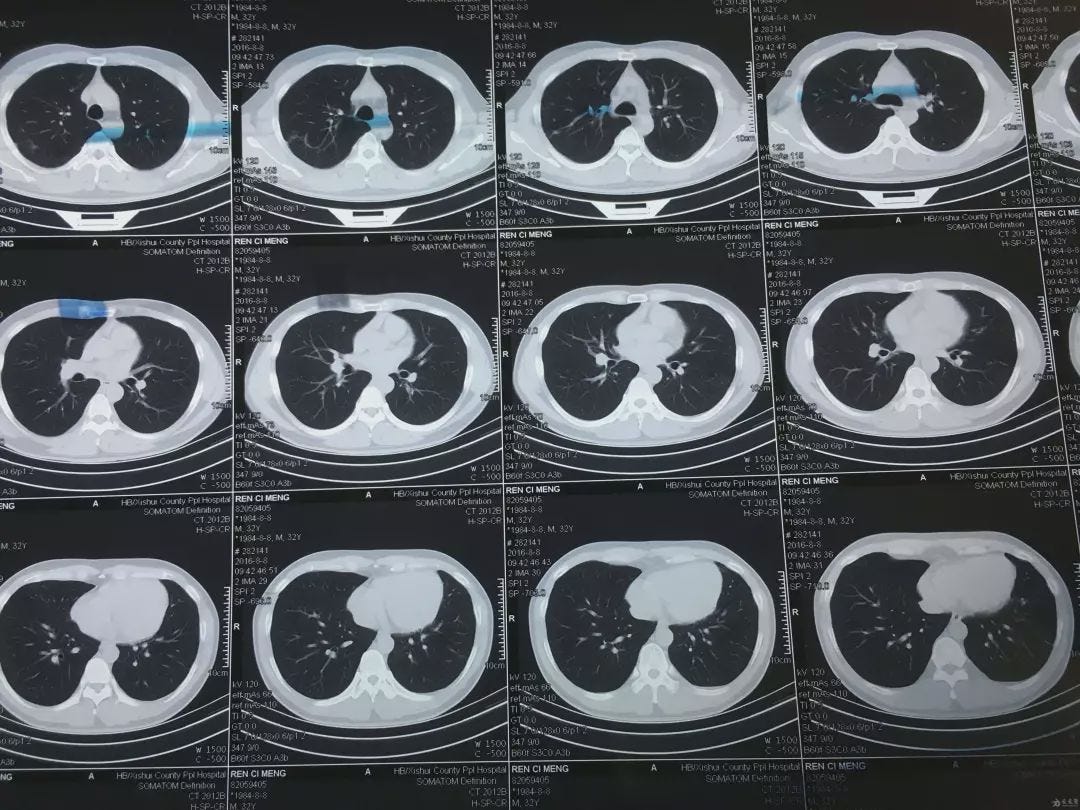

According to Zhang Ling, the team tried numerous technical approaches before arriving at a “hybrid” model that integrated segmentation, detection, and classification into a single framework.

The AI processing pipeline: from medical scan input to diagnostic output.

Here, we need to explain two concepts that are matters of life and death for medical AI: sensitivity and specificity.

Sensitivity measures how well the system catches true positives—the higher the sensitivity, the lower the chance of a missed diagnosis. Specificity measures how well it avoids false alarms—the higher the specificity, the lower the chance of a false positive.

In medical AI, these two metrics must be carefully balanced. If the AI is too “sensitive”—flagging every minor anomaly—you end up with an astronomical false positive rate.
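
The trade-off is easy to see in a small sketch. The scores and labels below are invented toy data, not PANDA outputs, and the function name is our own; the point is only that lowering the alert threshold raises sensitivity at the cost of specificity, and vice versa.

```python
from typing import List, Tuple


def sensitivity_specificity(
    scores: List[float], labels: List[int], threshold: float
) -> Tuple[float, float]:
    """Compute (sensitivity, specificity) when scores >= threshold are flagged."""
    tp = fn = tn = fp = 0
    for score, has_cancer in zip(scores, labels):
        flagged = score >= threshold
        if has_cancer and flagged:
            tp += 1  # true patient, correctly flagged
        elif has_cancer:
            fn += 1  # true patient, missed
        elif flagged:
            fp += 1  # healthy person, false alarm
        else:
            tn += 1  # healthy person, correctly cleared
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical model scores: first four are true patients, the rest are healthy.
scores = [0.95, 0.80, 0.60, 0.40, 0.70, 0.30, 0.20, 0.10, 0.05, 0.55]
labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]

for threshold in (0.25, 0.50, 0.75):
    sens, spec = sensitivity_specificity(scores, labels, threshold)
    print(f"threshold {threshold:.2f}: sensitivity {sens:.2f}, specificity {spec:.2f}")
```

On this toy data, the most trigger-happy threshold catches every patient but alarms half the healthy people, while the strictest one raises no false alarms and misses half the patients.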

During PANDA’s clinical trials, the research team and doctors went through a grueling three-month tug-of-war. The AI model at that point was hypersensitive to an extreme—it would even throw up alarms for fatty infiltration of the pancreas.

“If we had deployed that version in hospitals,” the R&D lead told us, “every day, a huge number of perfectly healthy patients would receive red high-risk warnings. They’d be thrown into a panic, hospitals would be overwhelmed with people rushing in for biopsies and needle aspirations. That wouldn’t just be a massive waste of medical resources—it would be an ethical catastrophe.”

In the end, they chose restraint. After countless cycles of error, feedback, and correction, they found that exquisitely delicate balance point. Today, PANDA’s sensitivity for detecting pancreatic cancer on non-contrast CT stands at 92.9%—meaning roughly 71 out of every 1,000 true patients would be missed. Its specificity reaches 99.9%—meaning out of every 1,000 healthy individuals, only one would be falsely flagged.
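
For readers who want to check the arithmetic, this short sketch converts the two reported rates into per-1,000 counts. Only the sensitivity and specificity values come from the reporting; the function names are ours.

```python
# Rates reported for PANDA on non-contrast CT.
SENSITIVITY = 0.929
SPECIFICITY = 0.999


def missed_per_1000_patients(sensitivity: float) -> float:
    """Expected missed cases among 1,000 people who truly have the disease."""
    return (1 - sensitivity) * 1000


def false_alarms_per_1000_healthy(specificity: float) -> float:
    """Expected false alarms among 1,000 people who do not have the disease."""
    return (1 - specificity) * 1000


missed = missed_per_1000_patients(SENSITIVITY)
false_alarms = false_alarms_per_1000_healthy(SPECIFICITY)

print(f"missed per 1,000 true patients: {missed:.0f}")
print(f"false alarms per 1,000 healthy people: {false_alarms:.0f}")
```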

We also interviewed Dr. Zhu Kelei of Ningbo University Affiliated First Hospital—the same doctor mentioned in the New York Times piece—back in the first half of 2025.

Dr. Zhu told us that it was Jin Gang, the deputy director of the Shanghai Institute of Pancreatic Diseases, who introduced him to the project.

“As one of Shanghai’s most renowned pancreatic surgeons, he’d already achieved everything there was to achieve. He’s doing this purely out of conscience—it’s an act of virtue,” Dr. Zhu said of Jin Gang, describing him as someone with tremendous personal charisma and sense of responsibility.

Since November 2024, the PANDA model has been running at Dr. Zhu’s hospital, analyzing over 180,000 CT scans and helping doctors identify 24 cases of pancreatic cancer—14 of them early-stage.

“In just the last six months, thanks to this project, we’ve found 6 additional early-stage pancreatic cancer patients. These were people who came in for a CT because of a cold or a fever,” Dr. Zhu said.

But since the AI only generates background alerts, doctors must personally call these patients—people with zero psychological preparation—to notify them of the need for follow-up testing. “A lot of people think we’re scammers, or that we’re pushing unnecessary treatments to boost hospital revenue. We’re not only working overtime to review the scans the AI flags—we’re also enduring patients’ mistrust.”

“But no matter how much we’re misunderstood, when you see those 3 patients successfully come through surgery and recover, it’s all worth it,” Dr. Zhu said.

Striking While the Iron Is Hot: The Gastric Cancer Screening Project

With PANDA’s success under their belt, the Alibaba medical AI team pressed forward.

In June 2025, the team published another paper in Nature Medicine, titled “AI-based large-scale screening of gastric cancer from noncontrast CT imaging.”

The gastric cancer screening paper, published in Nature Medicine, June 2025.

Due to long-standing dietary and lifestyle habits in the region (high salt intake, consumption of pickled and preserved foods, and smoking), gastric cancer is disproportionately prevalent in East Asia.

According to WHO data, roughly 1 million new gastric cancer cases are diagnosed globally each year, with China accounting for about 37%. China sees about 360,000 new diagnoses and 260,000 gastric cancer deaths annually, with the deaths representing close to 40% of global gastric cancer fatalities.

But because large-scale comprehensive screening has been so difficult to implement—and the general public has a strong aversion to gastroscopy—China’s five-year gastric cancer survival rate sits at just 10–25%. By comparison, Japan’s rate is 64%, and South Korea’s is 70%.

This new paper announced that ordinary non-contrast CT could now be used to screen for gastric cancer.

We traveled to the Zhejiang Cancer Hospital to interview the paper’s two co-first authors: Dr. Hu Can from the hospital, and Dr. Xia Yingda from Alibaba DAMO Academy.

Dr. Hu told us that before this collaboration, his team had already spent the better part of a year manually tracing tumor locations on thousands of contrast-enhanced CT scans of gastric cancer patients—work done for a separate research project.

With all that annotated CT data already prepared, as Dr. Hu put it: “The ingredients were washed and chopped—it was just a matter of who’d step up to cook, and how.” So when he connected with the Alibaba team, they clicked immediately and dove into the research.

They leveraged a clinical reality: many confirmed gastric cancer patients had both non-contrast and contrast-enhanced CT scans on file. So they fed both types of scans to the AI, using image registration algorithms to precisely align each patient’s scans in three-dimensional space.

Now, every blurry non-contrast CT image in the AI’s training set had a precisely marked tumor location. Dr. Xia explained their hypothesis: while the human eye can’t see the difference between these scans, somewhere within the raw data—across the grayscale range from -1024 to 1024—the tumor regions must contain some subtle pattern.

“We honestly weren’t sure how well it would learn,” Xia admitted. But when the results came in, everyone was stunned.

After the first training round on several thousand cases, the accuracy was strikingly high. For extremely early-stage patients (T1), the model could catch 40% of potential cases. For slightly more advanced but still manageable early-stage cases (T2), detection rates exceeded 80%. This meant patients who would have almost certainly been missed now had a real shot at early treatment and cure.

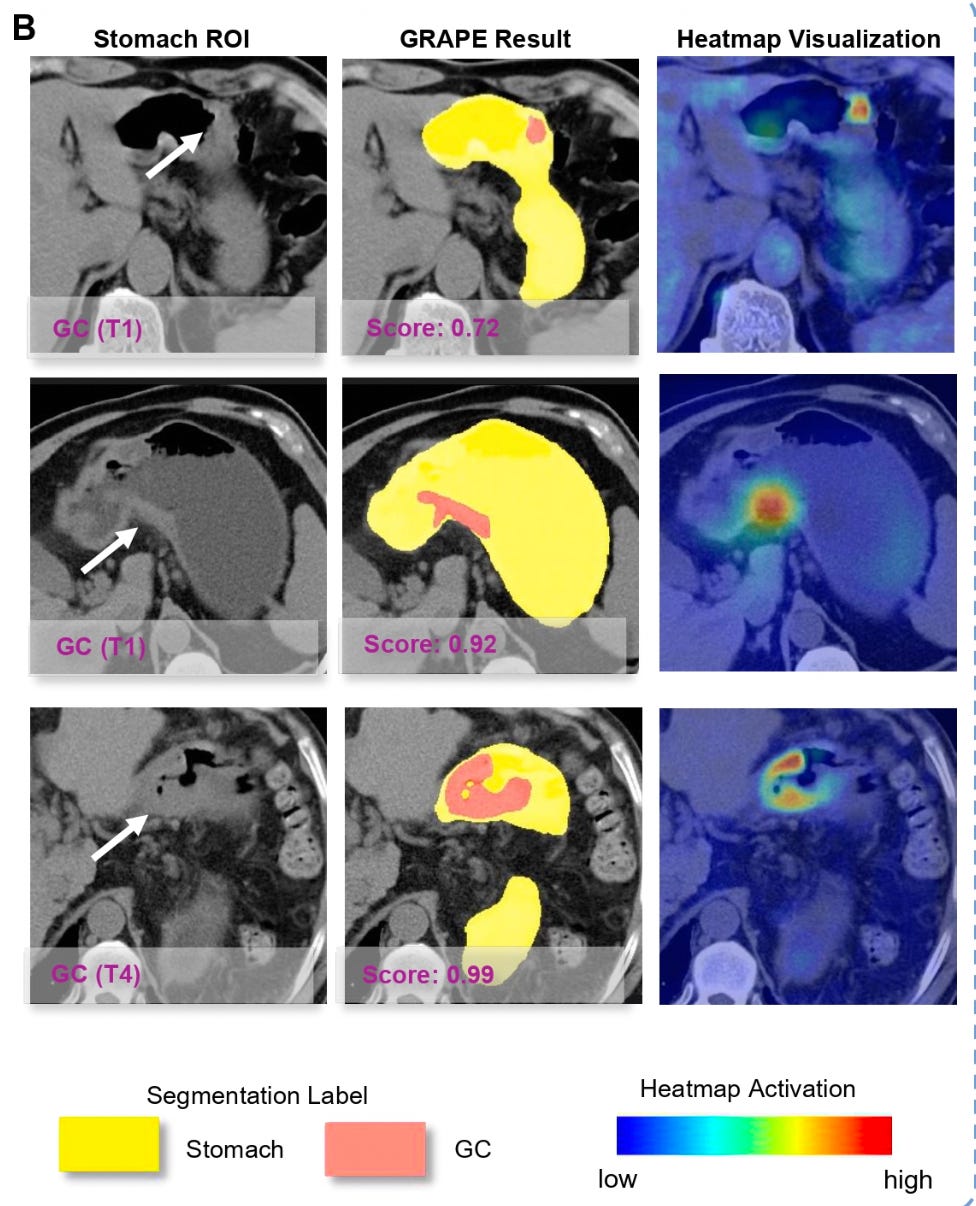

Buoyed by the results, the team launched validation across 16 centers nationwide on tens of thousands of cases. The AI model, named GRAPE, delivered impressive results on real-world data spanning over 70,000 people: among the people it flagged as high-risk, the gastric cancer detection rate was above 17.7%.

GRAPE model outputs: stomach region of interest, segmentation results, and heatmap visualization showing cancer detection across different stages (T1 and T4).

To put that in perspective: given China’s gastric cancer incidence of roughly 0.025%, if you randomly screened 10,000 people with gastroscopy, you might find 2 or 3 cases. But if you first ran them through the GRAPE model and only sent the “high-risk” group for gastroscopy, you’d find roughly 17 or more out of every 100 in that filtered cohort.
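
The comparison can be worked through explicitly. The sketch below uses only the two rates quoted above and, like the paragraph, treats the incidence figure as the chance that a randomly screened person has gastric cancer, which is a simplification. The variable names are our own.

```python
# Rates quoted in the article.
BASELINE_INCIDENCE = 0.00025    # roughly 0.025% of the general population
FLAGGED_DETECTION_RATE = 0.177  # 17.7% among people flagged as high-risk

# Expected cases found under each strategy.
cases_per_10000_random = BASELINE_INCIDENCE * 10_000
cases_per_100_flagged = FLAGGED_DETECTION_RATE * 100

# Gastroscopies needed, on average, to find one cancer.
scopes_per_case_random = 1 / BASELINE_INCIDENCE
scopes_per_case_flagged = 1 / FLAGGED_DETECTION_RATE

# How much richer in cancer cases the flagged group is.
enrichment = FLAGGED_DETECTION_RATE / BASELINE_INCIDENCE

print(f"random screening: {cases_per_10000_random:.1f} cases per 10,000 gastroscopies")
print(f"AI-filtered: {cases_per_100_flagged:.1f} cases per 100 gastroscopies")
print(f"gastroscopies per cancer found: {scopes_per_case_random:.0f} vs {scopes_per_case_flagged:.1f}")
print(f"enrichment factor: about {enrichment:.0f}x")
```

Put another way, under these figures a gastroscopy in the AI-filtered group is several hundred times more likely to find a cancer than one performed on a randomly chosen person.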

Dr. Hu shared a case that still haunts him.

He had a 45-year-old patient who came in because of difficulty swallowing. The diagnosis: late-stage gastric cancer. But Hu took an extra step—he pulled up the patient’s records from six months earlier. It turned out the patient had gotten an abdominal CT half a year prior for an unrelated issue.

The radiologist at the time had no reason to suspect gastric cancer, and the report came back normal. But when Dr. Hu fed that six-month-old scan into the AI model, within seconds, the screen flashed a red warning. The AI had clearly outlined a region in the stomach—early-stage gastric cancer.

“If you asked me to look at it now, I could maybe, just barely, make out some trace. But at the time, no one would have looked there, and no one would have caught it,” Hu said, his voice heavy with frustration.

If this AI had existed six months earlier, this patient’s fate would have been completely rewritten. A single CT scan, a single missed moment—the distance between life and death.

At the Zhejiang Cancer Hospital, the AI system was already running quietly on the hospital’s servers. Every day, more than 1,000 CT exams—any scan whose coverage included the stomach area—would be automatically analyzed. It was essentially a free gastric cancer screening add-on for every CT patient.

To improve their answer rate when calling flagged patients, the team even partnered with telecom carriers to display the cancer hospital’s official caller ID. Even so, many people’s first reaction was that it was a scam call.

Exasperating as that was, the team persisted—waiting every day for that one answered call, for the chance to tell a stranger: “We caught it early. Don’t be afraid.” When Dr. Hu told us these stories, his voice was calm, but his eyes were bright.

We also attended the project’s press conference. One of the paper’s co-authors—the head of gastric surgery at Zhejiang Cancer Hospital—was notably absent, because that day, he was in the operating room saving a patient.

Outrunning Death: Aortic Dissection Screening

In August 2025, the Alibaba medical AI team notched yet another breakthrough, publishing a paper in Nature Medicine that introduced a model called iAorta.

The iAorta paper in Nature Medicine, published August 2025.

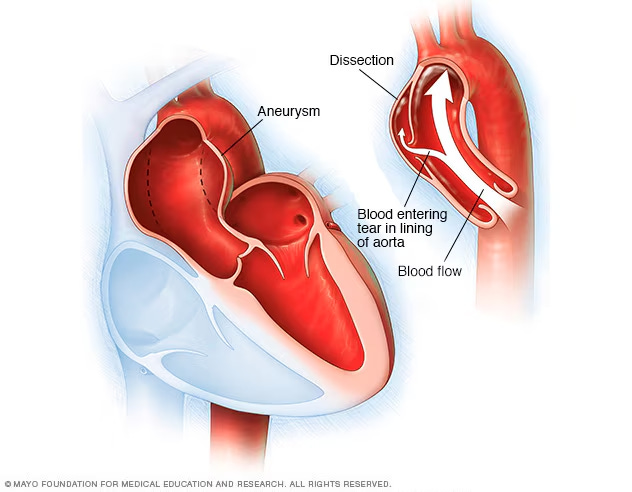

Aortic dissection is a tear in the wall of the aorta, the main artery leaving the heart. It is an extremely dangerous acute condition: onset is rapid, and patients can die of cardiac arrest within minutes. In the past, diagnosing this emergency typically required a dedicated contrast-enhanced CT, a process that could eat up half a day or even a full day, easily missing the critical treatment window.

Anatomical illustration of aortic dissection. Source: Mayo Foundation for Medical Education and Research.

In their paper, the researchers looked back at 130,000 emergency chest-pain patients and found that 121 patients with acute aortic syndrome had been missed during initial assessment. But when the doctors fed those patients’ original non-contrast CTs into iAorta, 109 were correctly identified—the missed diagnosis rate plummeted from a staggering 48.8% to just 4.8%.

This means that if this model had existed at the time, roughly 90% of those 121 missed patients could have been diagnosed and treated earlier, and more than 95% of all acute aortic syndrome cases in the cohort would have been caught.
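
The percentages in this passage can be reconstructed from the counts. Note that the total number of acute aortic syndrome cases is not stated here; the sketch below infers it from the 48.8% figure, so treat that total as our own back-calculation rather than a reported number.

```python
# Figures quoted in the article.
MISSED_INITIALLY = 121    # cases missed at initial assessment
RECOVERED_BY_AI = 109     # of those, cases iAorta identified
MISS_RATE_BEFORE = 0.488  # reported missed diagnosis rate without AI

# Inferred, not reported: total cases implied by the original miss rate.
implied_total = round(MISSED_INITIALLY / MISS_RATE_BEFORE)

still_missed = MISSED_INITIALLY - RECOVERED_BY_AI
miss_rate_after = still_missed / implied_total
recovered_share = RECOVERED_BY_AI / MISSED_INITIALLY
caught_overall = 1 - miss_rate_after

print(f"implied total cases: {implied_total}")
print(f"missed after AI: {still_missed} ({miss_rate_after:.1%})")
print(f"share of initially missed cases recovered: {recovered_share:.1%}")
print(f"share of all cases caught with AI: {caught_overall:.1%}")
```

The two headline numbers answer different questions: about nine in ten of the initially missed patients are recovered, which brings the overall miss rate down to under 5%.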

Recently, at a chest pain center in a pilot hospital in Shanghai, in just two months, iAorta identified 21 aortic dissection patients out of 15,584 people who came through the doors—with a sensitivity of 95.5% and specificity of 99.4%.

For those 21 patients the AI caught, the average time to confirmed diagnosis was cut to a remarkable 1.7 hours. Every hour of earlier treatment means a 1–2% improvement in survival odds.

One case involved a 43-year-old man who had endured upper abdominal pain for 12 hours before coming to the hospital. The initial suspicion was gallstones, so the doctor ordered a non-contrast abdominal CT. The scan had barely finished—the results hadn’t even reached the doctor—when iAorta fired off a red alert. The attending physician immediately ordered a contrast-enhanced CTA, which confirmed aortic dissection.

From admission to confirmed diagnosis: 94 minutes.

Without the AI, this man would likely have continued down the gallstone treatment pathway, drifting further from the correct diagnosis. The AI physically yanked him off death’s track—and saved an entire family along with him.

Today, the AI has been deployed across the first batch of 10 hospitals in Zhejiang province. After the system was deployed at Shaoxing Central Hospital, township health clinics under its jurisdiction began uploading scan data in real time. The cloud-based AI flags any concerns immediately, and patients can be transferred to the central hospital at once. A “15-minute rescue radius” was born.

Dr. Zhang Hongkun, a department chief at the First Affiliated Hospital of Zhejiang University, put it this way: “AI will never replace doctors. But if young doctors have to learn through the agony of missed diagnoses, the price is simply too high. What I want to see is AI giving more doctors the confidence to catch what they otherwise might not.”

Not a Solo Act

In recent months, DAMO Academy’s non-contrast CT “one scan, multiple screenings” initiative has continued to produce results.

In January 2026, the DAMO Academy team partnered with Fudan University to extend AI research into lymphoma, laryngeal cancer, and hypopharyngeal cancer. In February 2026, they collaborated with the Shengjing Hospital of China Medical University and other institutions to develop MAOSS, a fatty liver screening AI model that uses non-contrast CT imaging and serum markers to simultaneously assess hepatic steatosis and stage liver fibrosis—a first of its kind.

And they’re not alone. Huawei is developing a “medical pathology foundation model.” In traditional pathology departments, doctors spend hours at microscopes hunting for abnormal cells. Huawei is trying to use AI to conquer those massive pathology slides, finding cancer cells hiding in forgotten corners.

Tencent’s “Miying” project is piloting across grassroots hospitals, building another early screening network that spans esophageal cancer to glaucoma.

iFlytek’s “AI Medical Assistant” has been rolled out to township health clinics, serving as a real-time support system for primary care doctors—cross-checking diagnoses on the spot and reducing misdiagnosis and missed diagnoses at the village and township level.

The New York Times piece sparked intense discussion partly because an experiment of this scale—AI running silently in the background of routine scans at hundreds of hospitals, screening millions of patients for diseases they didn’t know to look for—has no real parallel elsewhere.

That’s not a statement about who has better technology. It’s a consequence of a very specific set of conditions: abundant and affordable imaging hardware, an overwhelming volume of patients, and a severe shortage of specialist attention. In that gap, these AI models found their purpose.

And at the end of the day, what matters isn’t the grand narrative. It’s the 43-year-old man who walked in thinking he had gallstones and walked out with a life-saving diagnosis. It’s the retired bricklayer in Ningbo. It’s the phone call from a stranger that says: “We caught it early. Don’t be afraid.”